AI apps that undress women's photos surge in popularity; women are unsafe

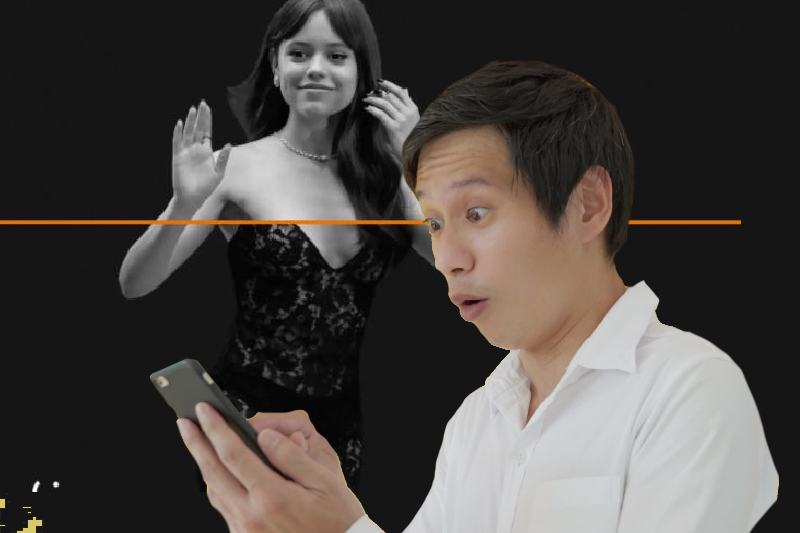

Apps and websites that use artificial intelligence (AI) to undress people in photos are surging in popularity. The trend is especially harmful for women, who are the main targets, and it has caught the attention of people across the world.

What could go wrong? Are women safe? Can you still post your photos on social media? What could the consequences be? Is AI a threat to privacy?

These apps manipulate existing pictures and videos of people and make them appear nude without their consent. This is a threat to women across the world.

The dark side of AI

Many apps that use artificial intelligence to undress people only work on women. The images are typically taken from social media platforms without the women's knowledge. The apps then erase the clothing and instantly produce a version in which the person appears completely nude. Sometimes, the fake images cannot be told apart from real ones.

A recent study by the social media analytics firm Graphika analyzed 34 companies that offer to undress people in images. The study describes what these services produce as synthetic non-consensual intimate imagery (NCII).

These websites received a whopping 24 million unique visits in September alone. The availability of open-source AI image diffusion models has made it far easier to generate such images.

What did the researchers say?

The researchers said, “Bolstered by these AI services, synthetic NCII providers now operate as a fully-fledged online industry.” The resulting nude images are shared on the web without the women's consent.

It is becoming harder to tell whether an image is authentic or fabricated. In the United States, there is no federal law that specifically bans the creation of deepfake pornography.

In response to this disturbing trend of nudifying women, companies such as TikTok and Meta Platforms Inc. have begun blocking keywords associated with these undressing apps.

In June, the Federal Bureau of Investigation (FBI) warned of an increase in the manipulation of photos of women and minors to create explicit content.

There is an urgent need for stringent measures to safeguard the privacy of women.